I’m becoming convinced that Jeremy Howard is right to predict that deep learning is going to be “more important and more transformational than the internet.” If you don’t know who Jeremy Howard is, he’s half of the duo behind fast.ai’s free, high-quality deep learning course series, which is dedicated to making deep learning accessible to everyone.

Deep learning takes advantage of certain graphics processors (GPUs) to be efficient. If you take the course, it’s recommended that you sign up for an Amazon Web Services machine with an appropriate GPU so you can just run the provided setup scripts and be on your way learning deep learning. But you may want to try to get everything set up on your own machine if you happen to have one. I just built a small server and added a modest GPU just for this purpose so I figured I’d give it a whirl. This is how I did it.

Key Specs:

- OS: Ubuntu Linux 17.04

- RAM: 16 GB (though I filled that up in Lesson 2 and will be ordering 16 GB more)

- GPU: NVIDIA GeForce GTX 1060 6GB

I started from this script, provided by fast.ai. I didn’t want to bother with Anaconda for Python, so I just installed the packages globally. If you need multiple environments, set up virtualenv instead.

Python libraries

I kind of mixed Ubuntu packages with pip packages for the Python stuff. If you hit an import that fails during the lessons, go ahead and install that package as well.

sudo apt install python-sklearn python-matplotlib python-pandas python-pil python-h5py

sudo pip install bcolz theano keras==1.2.2 jupyter kaggle-cli
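To find failing imports before a notebook trips over them, something like the following could help. It is only a rough sketch: the list of import names is my guess at what the packages installed above provide, and `missing_modules` is a made-up helper, not part of the fast.ai scripts.

```python
import importlib

# Import names (not apt/pip package names) for the libraries installed above.
# This list is an assumption; extend it as the lessons require.
REQUIRED_MODULES = [
    "sklearn", "matplotlib", "pandas", "PIL", "h5py",
    "bcolz", "theano", "keras",
]


def missing_modules(names):
    """Return the names from `names` that fail to import on this interpreter."""
    missing = []
    for name in names:
        try:
            importlib.import_module(name)
        except ImportError:
            # Not installed (or one of its own dependencies is missing).
            missing.append(name)
    return missing

# Example: missing_modules(REQUIRED_MODULES) returns the modules still to install.
```

Anything it reports still needs an `apt` or `pip` install. Note that this actually imports each module, so checking `theano` will also trigger its GPU setup.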

NVIDIA, CUDA, and cuDNN installation

I just installed the proprietary NVIDIA drivers and CUDA packages right from the Ubuntu repos:

sudo apt-get install nvidia-381 nvidia-cuda-dev nvidia-cuda-toolkit nvidia-nsight

Then I signed up as an NVIDIA Developer Member to get access to the cuDNN libraries. I downloaded the libraries built for Ubuntu 16.04 and copied them over. I originally tried the cuDNN 7 libraries but got scared by the “newer than tested” warning Theano gave later (though Jeremy then recommended just not worrying about it, so the newer cuDNN libraries may work fine).

sudo cp cuda-cdnn-5.1/lib64/* /usr/local/cuda/lib64/
sudo cp cuda-cdnn-5.1/include/* /usr/local/cuda/include/

I still struggled to get Theano to import. I was getting big errors when testing, and finally a dissatisfying message:

$ python -c "import theano"
[errors...]
collect2: error: ld returned 1 exit status
Using gpu device 0: GeForce GTX 1060 6GB (CNMeM is disabled, cuDNN None)

That None was a problem: without cuDNN, Theano misses out on the GPU-optimized routines and training is far slower. There was a g++/gcc version issue, so I installed gcc-4.9 and g++-4.9 and made my .theanorc file say this:

[global]
device = gpu
floatX = float32
cxx=/usr/bin/g++-4.9
[cuda]
root = /usr/local/cuda
[dnn]
enabled = True
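Since a typo in .theanorc fails quietly, it can be worth checking the file before blaming the drivers. Here is a minimal sketch using Python 3's standard `configparser`. The settings are inlined from the file above so it runs without touching the real `~/.theanorc`, and `load_theanorc` and `check_theanorc` are names I made up for illustration.

```python
import configparser

# The settings from the post, inlined so the sketch has no file dependency.
THEANORC_TEXT = """
[global]
device = gpu
floatX = float32
cxx=/usr/bin/g++-4.9
[cuda]
root = /usr/local/cuda
[dnn]
enabled = True
"""


def load_theanorc(text):
    """Parse .theanorc-style INI text into a ConfigParser."""
    parser = configparser.ConfigParser()
    # Preserve option names as written (ConfigParser lowercases them by default).
    parser.optionxform = str
    parser.read_string(text)
    return parser


def check_theanorc(parser):
    """Return a list of problems; an empty list means the basics look right."""
    problems = []
    if parser.get("global", "device", fallback=None) != "gpu":
        problems.append("global.device is not 'gpu'")
    if parser.get("global", "floatX", fallback=None) != "float32":
        problems.append("global.floatX is not 'float32'")
    if not parser.has_option("cuda", "root"):
        problems.append("cuda.root is missing")
    return problems


config = load_theanorc(THEANORC_TEXT)
print(check_theanorc(config))
```

To check the real file, you could read it with `open(os.path.expanduser("~/.theanorc")).read()` and pass the text to `load_theanorc`. This only verifies that the settings are present; it says nothing about whether Theano can actually find cuDNN.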

Still no luck. Then, finally, I found that running the following would get it to work.

$ export LIBRARY_PATH=/usr/local/cuda/lib64
$ python -c "import theano"
Using gpu device 0: GeForce GTX 1060 6GB (CNMeM is disabled, cuDNN 5110)

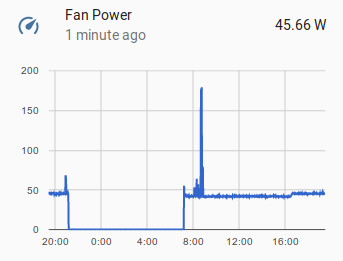
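If you want to script that check instead of eyeballing the banner, the two pieces could look roughly like this. It is a sketch under assumptions: both function names are hypothetical, the regex is based only on the two banner lines shown in this post, and unlike the plain `export` above it prepends to any existing `LIBRARY_PATH` rather than replacing it.

```python
import os
import re

CUDA_LIB_DIR = "/usr/local/cuda/lib64"  # the path used in the post


def env_with_library_path(base_env, lib_dir=CUDA_LIB_DIR):
    """Return a copy of base_env with lib_dir at the front of LIBRARY_PATH."""
    env = dict(base_env)
    existing = env.get("LIBRARY_PATH", "")
    # Drop empty entries and any earlier copy of lib_dir to avoid duplicates.
    parts = [p for p in existing.split(os.pathsep) if p and p != lib_dir]
    env["LIBRARY_PATH"] = os.pathsep.join([lib_dir] + parts)
    return env


def cudnn_version_from_banner(output):
    """Extract the cuDNN field from Theano's 'Using gpu device' line.

    Returns the version string (for example '5110'), or None when the
    banner is absent or reports 'cuDNN None'.
    """
    match = re.search(r"Using gpu device.*cuDNN (\w+)\)", output)
    if match is None or match.group(1) == "None":
        return None
    return match.group(1)
```

One way to use it would be to run `python -c "import theano"` through `subprocess.run` with `env=env_with_library_path(os.environ)`, capture both stdout and stderr, and pass the combined text to `cudnn_version_from_banner`. A `None` result means you are back in the situation described earlier.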

Woohoo! We’re in business. All the notebooks and things worked great after that. In some notebooks, if you get MemoryErrors, you may have to decrease num_batches from 64 to 32. While you’re running Lesson 1, you can check GPU status with nvidia-smi. My server ramped up from 40W all the way to about 175W under load. Neat!
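For watching the GPU from a script rather than a terminal, nvidia-smi has a CSV query mode that is easy to parse. The following is a small sketch, assuming the driver's `nvidia-smi` is on the PATH; the choice of fields and the helper names are mine, not anything the course provides.

```python
import subprocess

# Fields to request: power draw in watts, memory in MiB (with `nounits`).
QUERY_FIELDS = ["power.draw", "memory.used", "memory.total"]


def parse_gpu_status(csv_text):
    """Parse `--format=csv,noheader,nounits` output into one dict per GPU."""
    gpus = []
    for line in csv_text.strip().splitlines():
        values = [v.strip() for v in line.split(",")]
        # Values stay as strings because some GPUs report fields as unsupported.
        gpus.append(dict(zip(QUERY_FIELDS, values)))
    return gpus


def query_gpu_status():
    """Run nvidia-smi and return the parsed status (needs the NVIDIA driver)."""
    cmd = [
        "nvidia-smi",
        "--query-gpu=" + ",".join(QUERY_FIELDS),
        "--format=csv,noheader,nounits",
    ]
    output = subprocess.check_output(cmd).decode("utf-8")
    return parse_gpu_status(output)
```

Calling `query_gpu_status()` while a lesson trains should show memory use climbing. If `memory.used` sits close to `memory.total`, a smaller batch is the first thing to try.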

Deep learning, here I come!

Thank you for this!

Just starting the fast.ai course now, and trying to set up the correct environment on my laptop.